
If you do not do this, you will end up with an exception such as FileNotFoundException or an invalid input file path exception.

#Install apache spark standalone driver#

NOTE: While executing the “docker-compose up” command, you may get an error such as “Invalid Volume Specification”.

spark-master:
command: bin/spark-class .master.Master -h spark-master
command: bin/spark-class .worker.Worker spark://spark-master:7077

#Install apache spark standalone code#

# -header "Cookie: oraclelicense=accept-securebackup-cookie " $ | tar -xz -C /usr/local/

Accessing Spark Worker Node WebUI while running on Docker

The following is the code for docker-compose.
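A minimal sketch of such a docker-compose file, written out from the shell, might look like the following. The service names, the “spark” image tag, and the “4041:4040” mapping come from this article. The full class names (`org.apache.spark.deploy.master.Master` and `org.apache.spark.deploy.worker.Worker`), the 8080 and 8081 web UI ports, and the compose file version are assumptions, so treat this as an illustration rather than the exact file used here.

```shell
# Write a minimal two-service compose file (a sketch under the assumptions above).
cat > docker-compose.yml <<'EOF'
version: "2"
services:
  spark-master:
    image: spark            # tag produced by the docker build step
    command: bin/spark-class org.apache.spark.deploy.master.Master -h spark-master
    hostname: spark-master
    ports:
      - "7077:7077"         # cluster port used in spark://...:7077
      - "8080:8080"         # assumed: default master web UI port
  spark-worker:
    image: spark
    command: bin/spark-class org.apache.spark.deploy.worker.Worker spark://spark-master:7077
    depends_on:
      - spark-master
    ports:
      - "8081:8081"         # assumed: default worker web UI port
      - "4041:4040"         # driver (spark-shell) web UI when run on the worker
EOF
```

With the file in place, running “docker-compose up” from the same folder should start one master and one worker container.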

#Install apache spark standalone install#

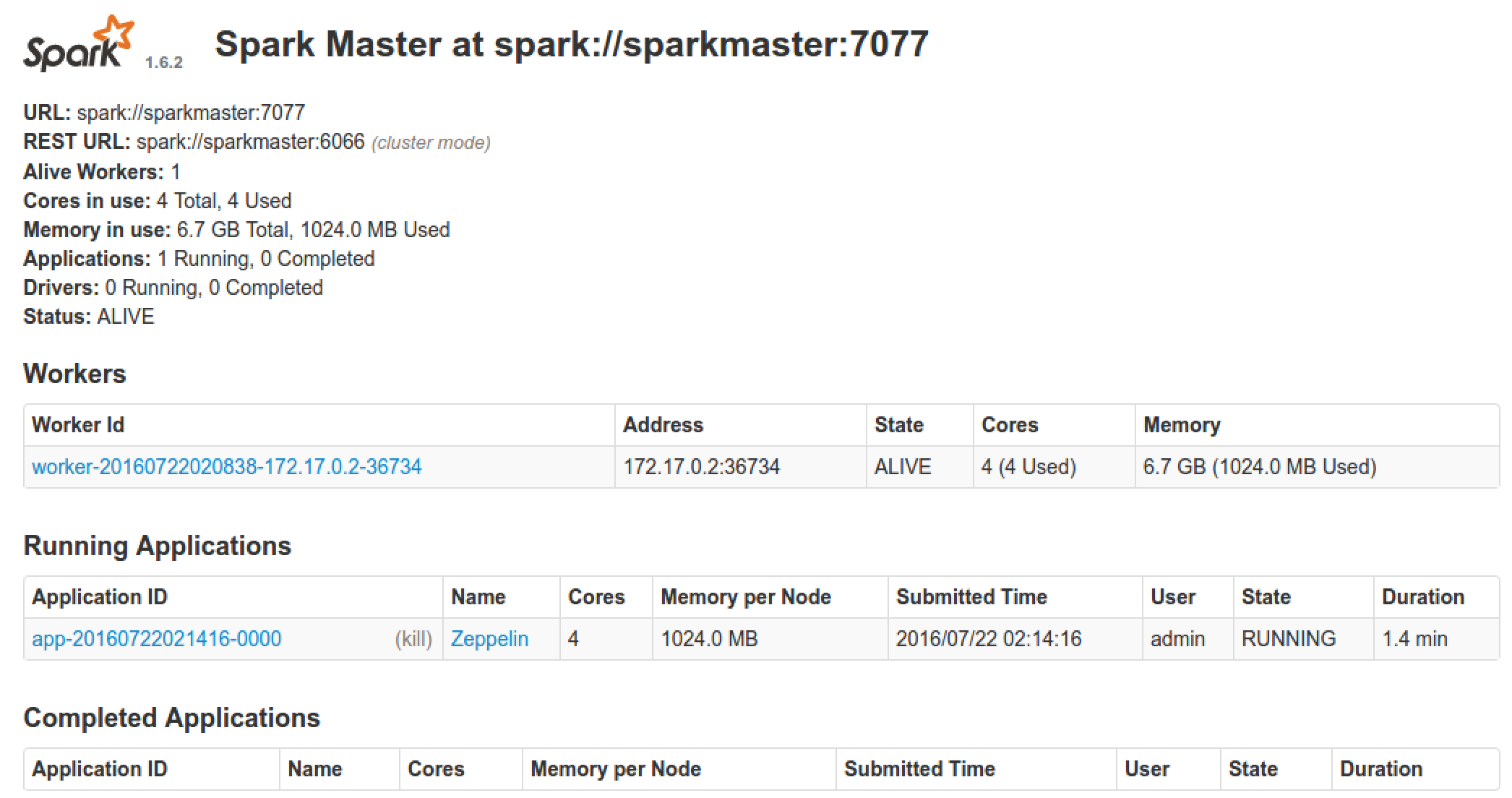

RUN apt-get -y install software-properties-common
echo oracle-java8-installer shared/accepted-oracle-license-v1-1 select true | debconf-set-selections && \
add-apt-repository -y ppa:webupd8team/java && \
apt-get install -y oracle-java8-installer && \

Note the following for setting up the image:

- Go to the folder where you saved the spark.df file mentioned above.
- Execute a command such as “docker build -f spark.df -t spark .”
- Note that the image is tagged as “spark”; this is the name referenced in the docker-compose file presented in this article.
- If you choose a different tag name, make sure to change the image name in the docker-compose file as well.
- Once the build completes, execute the command “docker images” to make sure an image named “spark” has been set up.

Several different cluster managers are supported.

One could start the driver program (SparkContext) on either the master or a worker node with a command such as spark-shell --master spark://192.168.99.100:7077. In client mode, the driver is launched in the same process as the client that submits the application. In cluster mode, however, the driver is launched from one of the Worker processes inside the cluster, and the client process exits as soon as it has submitted the application, without waiting for the application to finish.

To access the WebUI for a driver program (spark-shell) running on a worker node, one may need to add a port mapping such as “4041:4040” under the worker node entry in the docker-compose file and then open the URL 192.168.99.100:4041.
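To make the difference between client and cluster mode concrete, here is a small shell sketch that prints the spark-submit command line for each deploy mode. The master URL is the one used in this article; the helper name `submit_cmd`, the class `com.example.App`, and `app.jar` are hypothetical placeholders, not part of the original setup.

```shell
# Hypothetical helper: print the spark-submit command for a given deploy mode.
# MASTER_URL is the address used in this article; class and jar are placeholders.
MASTER_URL="spark://192.168.99.100:7077"

submit_cmd() {
  mode="$1"   # expected: "client" or "cluster"
  case "$mode" in
    client|cluster) ;;
    *) echo "unknown deploy mode: $mode" >&2; return 1 ;;
  esac
  echo "spark-submit --master $MASTER_URL --deploy-mode $mode --class com.example.App app.jar"
}

submit_cmd client    # driver runs in the same process as the submitting client
submit_cmd cluster   # driver is launched on a worker; the client exits after submitting
```

The helper only prints the command so that it can be inspected before being run inside the master or worker container.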

One could also run and test the cluster setup with just two containers, one for the master and another for a worker node.

Spark Standalone Cluster Setup with Docker Containers

The diagram shows three Docker containers: one for the driver program, another hosting the cluster manager (master), and the last one for the worker program.